Content-based Image Annotation and Retrieval

CVPR 2010 (oral), ECCV 2012

Abstract (of my ECCV paper)

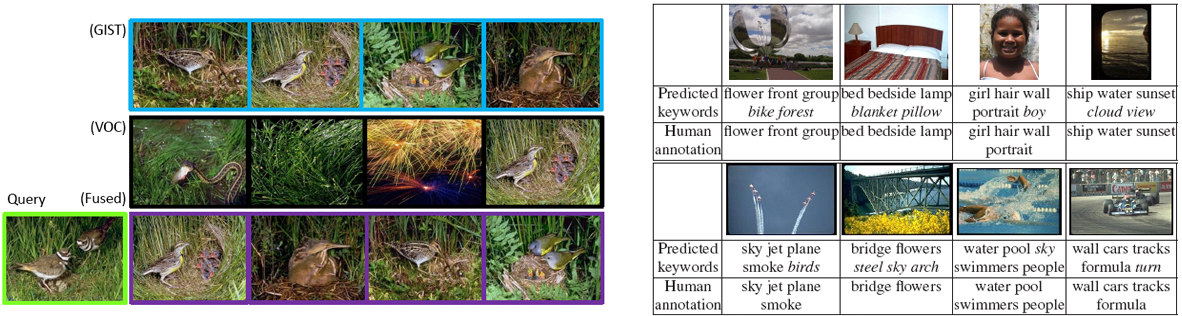

Recent image retrieval algorithms based on local features indexed by a vocabulary tree and holistic features indexed by compact hashing codes both demonstrate excellent scalability. However, their retrieval precision may vary dramatically among queries. This motivates us to investigate how to fuse the ordered retrieval sets given by multiple retrieval methods, to further enhance the retrieval precision. Thus, we propose a graph-based query specific fusion approach where multiple retrieval sets are merged and reranked by conducting a link analysis on a fused graph. The retrieval quality of an individual method is measured by the consistency of the top candidates' nearest neighborhoods. Hence, the proposed method is capable of adaptively integrating the strengths of the retrieval methods using local or holistic features for different queries without any supervision. Extensive experiments demonstrate competitive performances on 4 public datasets, i.e., the UKbench, Corel-5K, Holidays and San Francisco Landmarks datasets.
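The fusion idea in the abstract can be illustrated with a small sketch. It is not the paper's implementation: it assumes each retrieval method supplies a ranked neighbor list per image, that edge weights are the Jaccard overlap of two images' top-k neighborhoods (one possible measure of neighborhood consistency), and that plain PageRank serves as the link analysis. The function names (`build_graph`, `rerank`) and the toy image ids are hypothetical.

```python
import numpy as np

def jaccard(a, b):
    """Overlap of two neighbor lists, in [0, 1]."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def build_graph(neighbors, query, k):
    """Weighted undirected graph around the query for ONE retrieval method.

    neighbors: dict mapping image id -> ranked list of nearest neighbors.
    Edge weight (assumption): Jaccard overlap of the two top-k neighborhoods.
    """
    edges = {}
    frontier = [query] + list(neighbors[query][:k])
    for u in frontier:
        for v in neighbors.get(u, [])[:k]:
            if u == v:
                continue
            w = jaccard(neighbors.get(u, [])[:k], neighbors.get(v, [])[:k])
            if w > 0:
                edges[tuple(sorted((u, v)))] = w
    return edges

def pagerank(edges, damping=0.85, iters=100):
    """Power-iteration PageRank on a weighted undirected graph."""
    if not edges:
        return {}
    nodes = sorted({n for pair in edges for n in pair})
    index = {n: i for i, n in enumerate(nodes)}
    W = np.zeros((len(nodes), len(nodes)))
    for (u, v), w in edges.items():
        W[index[u], index[v]] = W[index[v], index[u]] = w
    # Every node here has at least one positive edge, so no column sums to zero.
    P = W / W.sum(axis=0, keepdims=True)
    r = np.full(len(nodes), 1.0 / len(nodes))
    for _ in range(iters):
        r = (1 - damping) / len(nodes) + damping * (P @ r)
    return dict(zip(nodes, r))

def rerank(neighbor_lists, query, k):
    """Fuse per-method graphs by summing edge weights, then rank by PageRank."""
    fused = {}
    for neighbors in neighbor_lists:
        for edge, w in build_graph(neighbors, query, k).items():
            fused[edge] = fused.get(edge, 0.0) + w
    scores = pagerank(fused)
    scores.pop(query, None)  # the query itself is not a retrieval result
    return sorted(scores, key=scores.get, reverse=True)

# Toy data: "local" stands in for a vocabulary-tree method,
# "holistic" for a hashing-based method.
local = {"q": ["a", "b", "c"], "a": ["q", "b", "c"],
         "b": ["q", "a", "c"], "c": ["x", "y", "z"]}
holistic = {"q": ["d", "a", "e"], "a": ["q", "d", "b"],
            "d": ["u", "v", "w"], "e": ["r", "s", "t"]}

ranking = rerank([local, holistic], "q", k=3)
print(ranking)
```

In this toy case, candidates whose neighborhoods do not overlap with the query's get no edges and drop out of the fused graph, so a method that returns inconsistent neighbors contributes little to the final ranking, without any supervision.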

Downloads

BibTeX

@inproceedings{zhang_CVPR10_annotation,
  title={Automatic image annotation using group sparsity},
  author={Zhang, Shaoting and Huang, Junzhou and Huang, Yuchi and Yu, Yang and Li, Hongsheng and Metaxas, Dimitris N},
  booktitle={IEEE Conference on Computer Vision and Pattern Recognition},
  pages={3312--3319},
  year={2010},
  organization={IEEE}
}

@ARTICLE{Zhang_Graph_Fusion,
  author={Zhang, S. and Yang, M. and Cour, T. and Yu, K. and Metaxas, D.N.},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  title={Query Specific Rank Fusion for Image Retrieval},
  year={2015},
  month={April},
  volume={37},
  number={4},
  pages={803--815}
}

Relevant publications

Shaoting Zhang, Ming Yang, Timothee Cour, Kai Yu, Dimitris N. Metaxas: Query Specific Rank Fusion for Image Retrieval. IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI) 37(4): 803-815 (2015)

Shaoting Zhang, Junzhou Huang, Hongsheng Li, Dimitris N. Metaxas: Automatic Image Annotation and Retrieval Using Group Sparsity. IEEE Transactions on Systems, Man, and Cybernetics, Part B 42(3): 838-849 (2012)

Shaoting Zhang, Ming Yang, Timothee Cour, Kai Yu, Dimitris N. Metaxas: Query Specific Fusion for Image Retrieval. ECCV (2) 2012: 660-673 (an online demo is available for the San Francisco dataset, about two million images)

Shaoting Zhang, Junzhou Huang, Yuchi Huang, Yang Yu, Hongsheng Li, Dimitris N. Metaxas: Automatic image annotation using group sparsity. CVPR 2010: 3312-3319 (oral presentation)

Yuchi Huang, Qingshan Liu, Shaoting Zhang, Dimitris N. Metaxas: Image retrieval via probabilistic hypergraph ranking. CVPR 2010: 3376-3383